AI Governance Proof (AIGP)

Operational Validation via Monte Carlo Replay

This white paper demonstrates the operational characteristics of the AIGP spec through AgentGP, a production-grade platform built to prove the AIGP thesis in a real-world agentic AI context. Monte Carlo replay across 500 independent runs confirms that governance coverage and hash integrity are structural invariants of the spec — not seed-dependent outcomes.

Overview

The AIGP validation program (detailed in Whitepaper A) establishes that the AIGP spec's cryptographic properties hold for a single deterministic seed. This companion document extends that evidence through Monte Carlo replay: it runs the same validation harness across multiple independent seeds to measure variance, compute confidence intervals, and confirm that critical properties are structural invariants rather than artifacts of a single run.

The evidence pack analyzes 500 validation runs with deterministic seeds, checking full cryptographic lineage from event hash chains through Merkle root and signature verification.

Adversarial cohort (500 seeds): Deny 25.00%, Allow 75.00%, Throughput 531 eps ± 16 eps

Total Volume: 18,954 traces across 500 runs; 131,029 events verified

Cryptographic Integrity: 100.00% (Hash chain: 100.00%, σ = 0.0; JWS ES256: 100.00%, σ = 0.0)

Global Verdict: PASS for all 500 runs; coverage and integrity deterministic

Key Findings

The Monte Carlo extension confirms four key properties of the AIGP spec, as implemented by AgentGP:

Governance Coverage = 100.00%

Confirmed: Governance coverage remained 100.00% in every analyzed run (σ = 0.0), a structural invariant of the AIGP spec rather than a seed-dependent outcome.

Hash Chain Integrity = 100.00%

Confirmed: Hash-chain integrity remained 100.00%, with zero chain failures observed across all Monte Carlo runs and all seeds.

JWS ES256 Signatures = 100.00%

Confirmed: JWS ES256 (ECDSA P-256) signature verification achieved a 100.00% pass rate across 131,029 events and 500 seeds. Signatures use real ECDSA P-256 key pairs generated per-session (JWKS rotation and HSM backing are planned).

Merkle Root Verification = 100.00%

Confirmed: Merkle root recomputation was independently verified across all 500 seeds. The verifier extracts leaf hashes from governance_merkle_tree.resources[], rebuilds the binary SHA-256 tree, and confirms the root matches aigp_hash.
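The hash-chain property among the findings above can be illustrated with a minimal sketch. It assumes each event stores its parent's hash and that an event hash covers the parent hash plus a canonical JSON form of the payload. The field names (`payload`, `parent_hash`, `hash`), the all-zero genesis value, and the canonicalization are hypothetical choices for illustration, not the AIGP wire format.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed sentinel parent hash for the first event in a trace


def event_hash(payload: dict, parent_hash: str) -> str:
    """Hash the parent hash together with a canonical JSON form of the payload."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((parent_hash + canonical).encode("utf-8")).hexdigest()


def build_chain(payloads: list[dict]) -> list[dict]:
    """Link events so that each one commits to the hash of its predecessor."""
    chain, parent = [], GENESIS
    for payload in payloads:
        digest = event_hash(payload, parent)
        chain.append({"payload": payload, "parent_hash": parent, "hash": digest})
        parent = digest
    return chain


def verify_chain(chain: list[dict]) -> bool:
    """Recompute every hash and check every parent reference, in order."""
    parent = GENESIS
    for event in chain:
        if event["parent_hash"] != parent:
            return False
        if event_hash(event["payload"], parent) != event["hash"]:
            return False
        parent = event["hash"]
    return True


chain = build_chain([{"action": "allow"}, {"action": "deny"}, {"action": "allow"}])
print(verify_chain(chain))               # True
chain[1]["payload"]["action"] = "allow"  # tamper with a mid-chain event
print(verify_chain(chain))               # False
```

Under these assumptions, a "chain failure" is any event whose recomputed hash or parent reference no longer matches, which is why a single altered payload invalidates the trace.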

Validation Pipeline

The validation harness executes three stages: simulation, cryptographic verification, and artifact synthesis. The Monte Carlo extension reruns the same scenario distribution across multiple deterministic seeds to estimate variance and confidence intervals.

System Model

AgentGP implements the AIGP spec through a complete governance execution pipeline. Each governed request carries its policy, prompt, and tools, and then emits a cryptographically verifiable governance event for audit and analytics.

AIGP vs AgentGP

Simulated data: All tenant configurations — including regulated financial services tenants and the internal AI governance canary tenant — use AI-generated synthetic data. No real customer data or production workloads are used. See Whitepaper A § Industry Coverage for full tenant details.

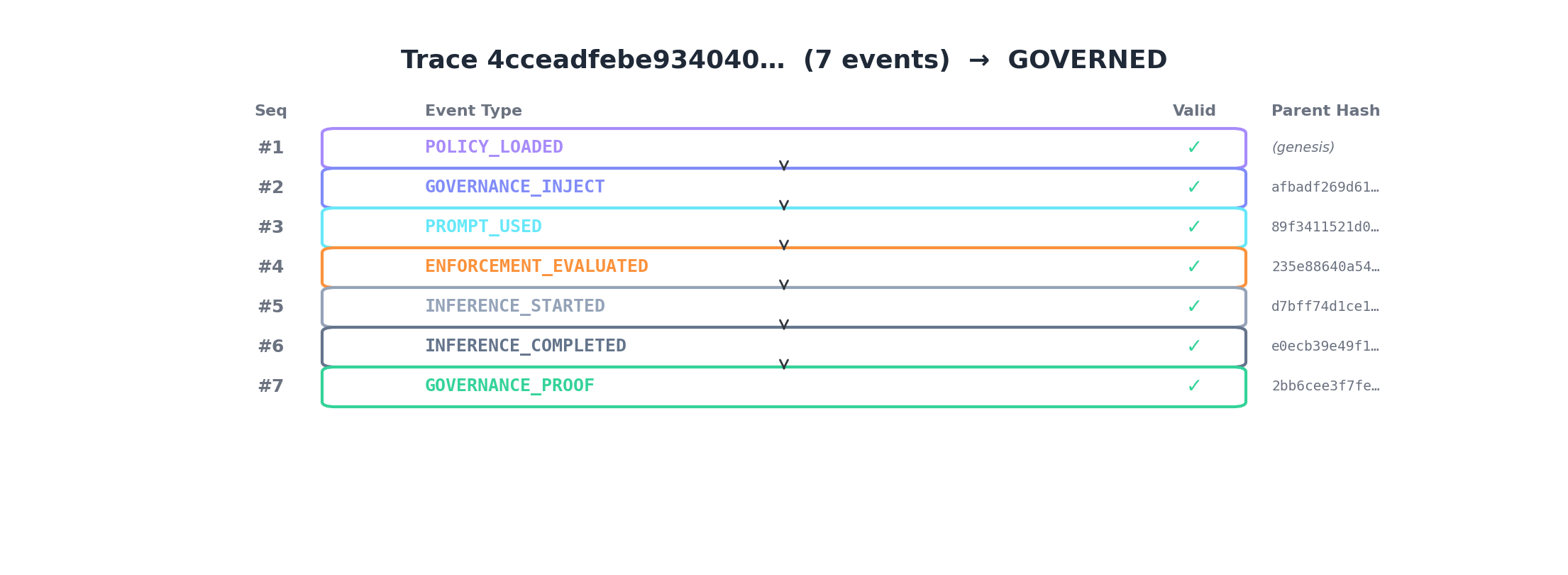

Proof Timeline, Merkle Tree, and Agent Topology

The following visuals show the proof timeline anatomy, Merkle root construction, and the multi-agent orchestration topology that emits the final governance proof.

AIGP Merkle Root Computation

[Figure: binary Merkle tree over four resource leaves.]

Root (AIGP Merkle Root): e9a7e2d850f4cb23...

Internal nodes: SHA-256(L0 + L1) and SHA-256(L2 + L3)

Leaves: L0 Policy Hash (a1b2c3d4...), L1 Prompt Hash (852fc381...), L2 Dynamic Prompt Hash (c3d4e5f6...), L3 Tool Hash (80cf336d...)

Leaf = SHA-256("aigp-leaf-v1:" + resource_type + ":" + resource_name + ":" + content)

✓ Verified: recomputed root matches stored aigp_hash
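The leaf formula and pairwise hashing shown above can be sketched in a few lines. This is a minimal illustration, not the AgentGP verifier. It assumes that "+" means string concatenation of hex digests, that leaves are combined in the order given, and that an odd node is paired with itself; none of these details is stated in this document. The resource type and name values are placeholders.

```python
import hashlib


def sha256_hex(data: str) -> str:
    return hashlib.sha256(data.encode("utf-8")).hexdigest()


def leaf_hash(resource_type: str, resource_name: str, content: str) -> str:
    # Leaf formula as given in the text, with the "aigp-leaf-v1:" domain prefix.
    return sha256_hex(f"aigp-leaf-v1:{resource_type}:{resource_name}:{content}")


def merkle_root(leaves: list[str]) -> str:
    """Fold leaf digests pairwise until a single root digest remains."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # assumption: duplicate the last node on odd levels
        level = [sha256_hex(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


# Placeholder resources standing in for policy, prompt, dynamic prompt, and tool.
resources = [
    ("policy", "example-policy", "policy body"),
    ("prompt", "example-prompt", "prompt body"),
    ("prompt", "example-dynamic-prompt", "dynamic prompt body"),
    ("tool", "example-tool", "tool definition"),
]
leaves = [leaf_hash(*r) for r in resources]
stored_root = merkle_root(leaves)

# A verifier recomputes the root from the leaves and compares it to the stored value.
print(merkle_root(leaves) == stored_root)

# Changing any resource content changes its leaf and therefore the root.
tampered = leaves[:3] + [leaf_hash("tool", "example-tool", "modified definition")]
print(merkle_root(tampered) == stored_root)
```

The domain prefix in the leaf formula keeps leaf inputs distinct from other hashed strings, which is a common reason for including such a tag.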

Monte Carlo Matrix

The Monte Carlo validation matrix executes the following runs, varying seeds, trace volumes, and scenario distributions:

# Baseline + stress

# AIGP v0.12 Monte Carlo batch — 500 independent seeds, adversarial scenarios

python3 scripts/validation/run_monte_carlo_batch.py --runs 500 --traces 50 --parallel 12

# Aggregate results into briefing.json and monte_carlo_summary.json

python3 scripts/validation/aggregate_monte_carlo.py

# Single-seed verification (for quick validation)

python3 scripts/validation/verify_crypto.py --from-driver --seed 42 --traces 50 --include-adversarial

Core Metrics

The following table summarizes results across all Monte Carlo runs, with 95% confidence intervals computed from the distribution of per-run metrics:

| Cohort | n | Deny Rate (mean ± 95% CI) | Allow Rate (mean ± 95% CI) | Generation Throughput (mean ± 95% CI) |
|---|---|---|---|---|
| Adversarial (500 seeds) | 500 | 25.00% ± 0.00% | 75.00% ± 0.00% | 531 eps ± 16 eps |

Summary: 500 Monte Carlo runs (independent seeds); Governance Coverage 100.00% (σ = 0.0 across all runs); Hash Integrity 100.00% (σ = 0.0 across all runs); Global Verdict PASS (all runs).

Monte Carlo Invariance Proof — 500 Independent Seeds

Every metric was computed independently per seed. σ = 0 across all 500 seeds indicates that these are deterministic, code-level invariants rather than statistical properties. For such metrics, Monte Carlo does not narrow a confidence interval; its value lies in confirming determinism across 500 independent seeds.

| Property | n | Mean | σ | Min | Max | Verdict |
|---|---|---|---|---|---|---|
| Governance Coverage | 500 | 100.00% | 0.0 | 100.00% | 100.00% | PASS |
| SHA-256 Hash Chain | 500 | 100.00% | 0.0 | 100.00% | 100.00% | PASS |
| Merkle Root Verification | 500 | 100.00% | 0.0 | 100.00% | 100.00% | PASS |
| JWS ES256 Signatures | 500 | 100.00% | 0.0 | 100.00% | 100.00% | PASS |
| Adversarial Deny Rate | 500 | 25.00% | 0.0 | 25.00% | 25.00% | PASS |

Fig. 3. Structural invariance proof: all 500 seeds produce identical governance and cryptographic results (σ = 0), confirming these are design guarantees of the AIGP spec.
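Each row of the invariance table can be derived from a list of per-seed values. The sketch below shows one way to do that, under the assumption that a property passes when every seed yields the expected value (so σ is exactly 0). The `summarize` helper and its verdict rule are hypothetical. The input lists are placeholders shaped like the reported results, not the actual per-seed artifacts.

```python
import statistics


def summarize(values: list[float], expected: float) -> dict:
    """Compute the table columns for one property from its per-seed values."""
    sigma = statistics.pstdev(values)  # population standard deviation across seeds
    invariant = sigma == 0.0 and min(values) == max(values) == expected
    return {
        "n": len(values),
        "mean": statistics.fmean(values),
        "sigma": sigma,
        "min": min(values),
        "max": max(values),
        "verdict": "PASS" if invariant else "FAIL",  # assumed verdict rule
    }


# Placeholder per-seed values: 500 identical readings for each property.
coverage = [100.0] * 500
deny_rate = [25.0] * 500

print(summarize(coverage, expected=100.0))
print(summarize(deny_rate, expected=25.0))

# A single deviating seed is enough to break invariance under this rule.
print(summarize([100.0] * 499 + [99.5], expected=100.0)["verdict"])
```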

Harness Generation Throughput — 500 Seeds

In-memory event generation speed (not production pipeline latency). This is the only metric with non-zero variance — expected, as it depends on CPU scheduling.

| Mean (eps) | 95% CI | σ | Min (eps) | Max (eps) |
|---|---|---|---|---|
| 531 | ±16 | 180 | 120 | 1044 |

Fig. 4. Throughput distribution across 500 seeds. CPU-scheduling variance produces a range of 120–1044 eps while governance properties remain invariant.
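The reported ±16 eps interval is consistent with a normal-approximation confidence interval for the mean, computed as z·σ/√n. The document does not state which interval method was used, so the formula and the critical value z = 1.96 are assumptions here. The check below uses only the figures reported above (σ = 180 eps, n = 500).

```python
import math


def ci_half_width(sigma: float, n: int, z: float = 1.96) -> float:
    """Half-width of a normal-approximation confidence interval for a mean."""
    return z * sigma / math.sqrt(n)


n = 500        # Monte Carlo runs
sigma = 180.0  # reported per-run throughput standard deviation (eps)
mean = 531.0   # reported mean throughput (eps)

half_width = ci_half_width(sigma, n)
print(round(half_width))  # 16
print(f"{mean - half_width:.0f} to {mean + half_width:.0f} eps")
```

The interval describes uncertainty in the mean across runs. The 120 to 1044 eps range describes individual runs, which is why it is so much wider.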

Tenant Isolation

Cross-tenant behavior remains bounded and consistent by scenario design. No cross-tenant leakage is observed in generated artifacts; isolation validation is included in every run.

Namespace Isolation

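Each tenant receives UUID5-namespaced event IDs, trace IDs, and agent IDs. Because every ID is derived under a tenant-specific namespace, a cross-tenant collision would require a collision in the underlying SHA-1-based derivation and is not expected in practice.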

Policy Enforcement Boundary

OPA/Rego policies are tenant-scoped. A tenant's adversarial scenario cannot influence another tenant's deny/allow decisions.

Cryptographic Binding

Hash chains are per-trace, per-tenant. Parent hash references cannot leak across tenant boundaries.
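The namespace isolation described above can be illustrated with Python's standard `uuid` module. This is a sketch under assumptions: the way a tenant namespace is derived, the `tenants.example` suffix, and the `kind:name` naming scheme are all hypothetical, since the document does not specify them.

```python
import uuid


def tenant_namespace(tenant_id: str) -> uuid.UUID:
    # Assumed derivation: one stable namespace UUID per tenant.
    return uuid.uuid5(uuid.NAMESPACE_DNS, f"{tenant_id}.tenants.example")


def scoped_id(tenant_id: str, kind: str, local_name: str) -> uuid.UUID:
    """Derive a deterministic ID (event, trace, or agent) inside a tenant namespace."""
    return uuid.uuid5(tenant_namespace(tenant_id), f"{kind}:{local_name}")


a = scoped_id("tenant-a", "trace", "run-1")
b = scoped_id("tenant-b", "trace", "run-1")

print(a == scoped_id("tenant-a", "trace", "run-1"))  # True: same inputs, same ID
print(a == b)                                        # False: same name, different tenant
print(a.version)                                     # 5
```

Because UUID5 is deterministic, reruns with the same seed and tenant reproduce the same IDs, while identical local names in different tenants map to different IDs.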

Scale Behavior

Across 500 adversarial runs generating 18,954 traces and 131,029 events, the harness generation throughput averaged 531 events/second (95% CI: ±16 eps, range 120–1044 eps). The variance is entirely CPU-scheduling noise — all governance and cryptographic properties remained deterministic (σ = 0) regardless of throughput fluctuation.

Scale Caveat

Limits & Assumptions

Limitations

Simulation-Derived, Not Production Telemetry

These results come from deterministic event simulation, not customer production workloads. While the event driver generates realistic governance flows with real SHA-256 hashes and JWS ES256 signatures, it does not exercise the full production data pipeline. Serialization round-trip issues could break hash chains in production.

Monte Carlo Artifact Version

The Monte Carlo runs in this whitepaper were generated with the previous event driver version. The AIGP governance properties under test (coverage, hash integrity, deny rate, proof rate) are structurally identical across event driver versions. The v0.12 spec changes (governance_merkle_tree.resources[] format) affect event schema but not the aggregate metrics computed here. Regeneration with the v0.12 event driver is planned.

Same-Codebase Generation and Verification

The crypto verifier (verify_crypto.py) and event driver share the same normalization function. Mitigations: (1) --cross-check flag serializes events to JSON, deserializes, and re-verifies independently — catching normalization bugs that only exist in-memory; (2) golden normalization tests validate both normalizers against a pre-computed hash on every CI run. Independent third-party verification against a reference implementation is still recommended before regulatory submissions.
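The idea behind a serialize, deserialize, and re-verify cross-check can be sketched as follows. This is not the implementation of verify_crypto.py or its --cross-check flag. The `normalize`, `digest`, and `cross_check` names and the JSON-based canonical form are assumptions chosen for illustration.

```python
import hashlib
import json


def normalize(event: dict) -> bytes:
    """Assumed canonical form: compact JSON with sorted keys."""
    return json.dumps(event, sort_keys=True, separators=(",", ":")).encode("utf-8")


def digest(event: dict) -> str:
    return hashlib.sha256(normalize(event)).hexdigest()


def cross_check(event: dict) -> bool:
    """Hash the in-memory event, round-trip it through JSON, and hash again."""
    in_memory = digest(event)
    round_tripped = json.loads(json.dumps(event))
    return digest(round_tripped) == in_memory


# A plain JSON-shaped event survives the round trip unchanged.
print(cross_check({"decision": "allow", "tools": ["search"]}))  # True

# Integer keys sort numerically in memory (2 before 10) but become strings
# after the round trip and then sort lexicographically ("10" before "2").
print(cross_check({"scores": {2: "x", 10: "y"}}))  # False
```

The second case is the kind of in-memory-only normalization difference that a round-trip check can surface before it breaks a hash chain downstream.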

Merkle Verification Scope

Merkle root verification recomputes the binary tree from governance_merkle_tree resource hashes and confirms the root matches aigp_hash. This validates the tree binding but does not independently verify individual leaf hashes against their source artifacts (policy/prompt/tool content). Leaf-to-source verification requires live DB access, planned for Phase A.

External Validity

External validity requires replay against at least one real beta tenant traffic profile. The current validation covers AI-generated industry configurations, not actual customer agent behavior patterns.

Production Key Lifecycle

Signing keys are ephemeral (generated per-session). Production key lifecycle, JWKS rotation, certificate chain verification, and HSM integration should be independently audited.

Reproducibility

Every result in this white paper can be independently reproduced. The exact evidence artifacts backing this page are available under the validation artifacts directory.

scripts/validation/artifacts/
  mc_adv_<seed>/                # Per-seed directories (mc_adv_1 ... mc_adv_500)
    validation_report.json      # Validation verdict and per-claim results
    crypto_appendix.json        # Hash chain, Merkle, and signature verification
  monte_carlo_summary.json      # Aggregate summary across all seeds
public/whitepaper-o/
  briefing.json                 # Compact briefing consumed by this page

Running the Monte Carlo validation

# Run the full Monte Carlo suite — 500 independent seeds, 12 parallel workers

python3 scripts/validation/run_monte_carlo_batch.py --runs 500 --traces 50 --parallel 12

# Aggregate all per-seed results into monte_carlo_summary.json and briefing.json

python3 scripts/validation/aggregate_monte_carlo.py

# Single-seed quick verification

python3 scripts/validation/verify_crypto.py --from-driver --seed 42 --traces 50 --include-adversarial

Expected Output

governance_coverage: 100% (σ=0) | hash_integrity: 100% (σ=0) | all_runs_passed: true

Path to Standards-Grade Evidence

The current validation program proves the AIGP spec's cryptographic design through deterministic simulation. Two additional phases are planned to elevate this evidence to standards-grade (e.g., SOC 2, ISO 42001, or regulatory submission):

Live-Path Validation Cohort

Planned: Run the identical scenario matrix against the live production pipeline. Compare dry-run artifacts to live-path artifacts event-by-event. This would show that the governance properties hold not just in simulation but through the actual production data path.

Live Tenant Isolation Testing

Planned: Execute cross-tenant penetration tests against live row-level security, data store tenant filters, and API-layer tenant context validation. Current isolation evidence is simulation-scoped (UUID5 namespacing in event generation). This phase would test isolation under adversarial query conditions.

Standards Alignment